Making the slowest "fast" page

This page was originally created on and last edited on .

Introduction

Manuel Matuzović wrote a blog post, "Building the most inaccessible site possible with a perfect Lighthouse score", as a concept to show the limitations of automated testing tools. The idea came from a Tweet from Zach Leatherman, in which he suggested "Free blog post idea: How to Build the Slowest Website with a Perfect Lighthouse Score". Manuel took it in a different direction, down the accessibility route, but after stumbling on the post again recently, along with the Tweet that kicked it off, I was curious about the original request. It seemed no one had been stupid enough to actually waste time building such a site in the two years since the Tweet.

Well, never having let the stupidity of an idea stop me in the past, I thought I'd have a go. It is a stupid, pointless exercise in many ways, but sometimes there are learnings in those. So, with all the recent focus on Core Web Vitals, was it possible to build a slow website with a perfect Lighthouse performance score of 100? And could we also ensure none of the web performance improvement audits were triggered? That last point would make this more interesting.

Note: This post is not meant as a criticism of Lighthouse or the Core Web Vitals: as Manuel noted in his experiment, there are limits to what these automated tests can do, and in general I think they do a very good job of attempting to measure the billions of different web pages out there. But it is an interesting thought experiment to see what their limitations are.

How Lighthouse measures performance

I've written before about what makes up the Lighthouse performance score. It is basically divided into two parts: six key metrics, which are used directly to calculate the overall Performance score, and a large number of other audits marked as Opportunities or Diagnostics, which don't directly impact the score but do offer advice on items that could improve web page performance. There were some suggestions on Zach's Twitter thread about how to achieve the "perfect" slow site, but many of these weren't viable if I wanted to avoid being flagged in those other audits. Audits like avoiding large JavaScript libraries, minimising unused JavaScript and CSS, and avoiding legacy JavaScript ruled out just importing a load of poorly performing frameworks. Similarly, audits like lazy loading images, reducing main thread tasks and avoiding large DOM sizes rule out just creating a page with millions of offscreen images. So I had to get a little creative to build a "slow" website that felt slow to the user but didn't feel slow to Lighthouse.

So let's have a look at what Lighthouse does in an attempt to measure a website's performance, starting with the six core metrics that make up the score, the impact of which can be seen in the handy Lighthouse Performance Scoring Calculator:

- First Contentful Paint (FCP) - This checks when the first contentful, or meaningful, item is drawn to the screen. So it ignores background colours and images as they are not "contentful". We'd need to think of a way to draw something that is meaningful to Lighthouse but not actually that useful to the end user, so the site still feels slow.

- Speed Index (SI) - This checks the visual progress of the page. If two pages load within 5 seconds, but the first loads 90% in the first second with the last 10% coming in at the end, and the second only loads 20% initially with the remaining 80% coming in at the end, then the first page is, quite rightly, seen as faster even though the total load time is the same.

- Largest Contentful Paint (LCP) - While FCP looks at the first meaningful paint, LCP looks at the largest meaningful item on the page. So a quick bit of text (e.g. a disclaimer) might fool FCP, but if the important part of the page (the actual text, or a banner image) takes longer then LCP will catch that.

- Time to Interactive (TTI) - This attempts to measure when you can actually use a page. If it draws really quickly to satisfy all the above, but clicking on anything does nothing for another few seconds, can you really consider the page loaded? "Rage clicks" are a real thing: frustrated users click on buttons (e.g. the hamburger menu on a mobile site) and nothing happens until the JavaScript kicks in.

- Total Blocking Time (TBT) - Similar to TTI but this looks at how busy the CPU is in general, even after that first TTI is reached.

- Cumulative Layout Shift (CLS) - This interesting new metric looks to identify those annoying moves of content as you attempt to read the page. We've all loaded articles and started to read, and then that banner image or advertisement finally loads and pushes the text all over the page, so you lose your place, have to find it again and get all frustrated.
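To put rough numbers on the Speed Index example in the list above, here is a minimal sketch that treats Speed Index as the area above the visual-progress curve. The `VisualSample` type, the `speedIndex` helper and the assumption that the first paint lands exactly at the one-second mark are all mine, purely for illustration; Lighthouse derives visual progress from video frames rather than from two hand-picked samples.

```typescript
// One sample of visual progress: at `timeMs` the viewport is `completeness` (0..1) complete.
interface VisualSample {
  timeMs: number;
  completeness: number;
}

// Approximates Speed Index as the area above the visual-progress curve:
// for each interval, (1 - completeness so far) * interval length.
// Samples are assumed to be sorted by time, and nothing is drawn at t = 0.
function speedIndex(samples: VisualSample[]): number {
  let total = 0;
  let prevTime = 0;
  let prevCompleteness = 0;
  for (const sample of samples) {
    total += (1 - prevCompleteness) * (sample.timeMs - prevTime);
    prevTime = sample.timeMs;
    prevCompleteness = sample.completeness;
  }
  return total;
}

// Hypothetical timings: both pages finish at 5 seconds.
const pageA: VisualSample[] = [
  { timeMs: 1000, completeness: 0.9 }, // 90% drawn after one second
  { timeMs: 5000, completeness: 1 },
];
const pageB: VisualSample[] = [
  { timeMs: 1000, completeness: 0.2 }, // only 20% drawn after one second
  { timeMs: 5000, completeness: 1 },
];

const speedIndexA = speedIndex(pageA); // roughly 1400
const speedIndexB = speedIndex(pageB); // roughly 4200
```

Under those assumed timings the first page comes out around three times better, even though both pages finish loading at the same moment.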

What's important to realise here is that Lighthouse is attempting to measure several items that impact the perceived performance of a page. This has been an ongoing evolution from the early days of web performance, when we just measured when the page was initially loaded through various older metrics like DOMContentLoaded, or load, or even Speed Index (which as per above is still useful and so still used). This is especially important as the web has moved on from simple static sites, and many sites load additional content after those first two metrics using JavaScript APIs.

However these metrics are synthetic metrics or "lab metrics". We run a website through a tool with a set of (often predefined) criteria like network speed and capacity and CPU speed and spit out the results. The question is whether those lab criteria are representative of the experience being felt by users in the real world. Which leads us on to Core Web Vitals.

Lighthouse versus Core Web Vitals

Core Web Vitals were launched by Google in May 2020 as a way of "Evaluating page experience for a better web". They take many of the concepts refined in Lighthouse and similar tools, and try to bring them out of the web performance circles that already knew about them and to the wider web. To that end, Google has stated that these metrics will be used as a ranking signal in its search results, suddenly shining a big spotlight on web performance for website owners through Search Engine Optimisation (SEO). While web performance advocates (like myself) have long preached the indirect impact of slow websites, here Google is wielding its big, powerful SEO stick to tie the two together directly. Slower sites will get less weighting in Google Search, and since such a large proportion of traffic for most sites comes from Google Search, this has caused quite a stir in the SEO community.

Core Web Vitals use some of the Lighthouse metrics in pretty much the same way, use some in a slightly different way, and also add one. As the name suggests, Google has tried to distil the six metrics discussed above even further into three "core" metrics that it thinks do a good job of representing page experience:

- Largest Contentful Paint (LCP) - which we discussed above and is the metric chosen to represent load speed. What's different from LCP in Lighthouse is that it is measured on real users. So if the vast majority of users of your site are on slower machines and networks than the Lighthouse instance you tested on, you will see a higher LCP. Conversely, if they are on faster conditions, the CWV LCP will be better than you expected.

- First Input Delay (FID) - which attempts to combine TTI and TBT to give an indication of the responsiveness of the website. The reason Lighthouse can't measure this, and has to proxy it with TTI and TBT, is that it is measured through real use. If a website is slow to become interactive, but most visitors are not trying to click buttons because they are reading the content, is that a problem? FID therefore tries to look at the actual delays users are experiencing rather than guessing at delays they might experience. So it measures the time from when a user first attempts to interact with a page (e.g. clicks a button) to when that event is processed.

- Cumulative Layout Shift (CLS) - this was discussed above but, like the other CWV, it is measured on real users and, controversially to some, it is measured throughout the lifetime of the page. This causes some problems for things like Single Page Apps, which do move about as the user uses the app. The Chrome team are looking for feedback on how to improve this, as more than a few people are concerned about negative impacts from shifts that may not be a real performance problem.
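The FID definition above boils down to a single subtraction. A minimal sketch, assuming we already have the two timestamps a browser exposes on a `first-input` performance entry (`startTime` and `processingStart`); the `FirstInputEntry` type and helper names are hypothetical, and the 100ms and 300ms boundaries are Google's published FID thresholds rather than anything measured for this post:

```typescript
// The two fields used from a browser "first-input" performance entry.
interface FirstInputEntry {
  startTime: number;       // when the user first interacted, in ms
  processingStart: number; // when the browser could start running the event handler, in ms
}

// FID is the gap between the interaction and the start of its processing.
function firstInputDelay(entry: FirstInputEntry): number {
  return entry.processingStart - entry.startTime;
}

type Rating = "good" | "needs improvement" | "poor";

// Buckets a delay using Google's published thresholds for FID.
function rateFid(delayMs: number): Rating {
  if (delayMs <= 100) return "good";
  if (delayMs <= 300) return "needs improvement";
  return "poor";
}

// Hypothetical visitor who clicks 1.2s in, while the main thread is busy for another 250ms.
const exampleDelay = firstInputDelay({ startTime: 1200, processingStart: 1450 }); // 250
const exampleRating = rateFid(exampleDelay); // "needs improvement"
```

Note that a visitor who never interacts produces no entry at all, which is exactly why a lab tool like Lighthouse has to fall back on proxies.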

So the Core Web Vitals are looking at real field data, rather than synthetic lab data. This is collected anonymously from Chrome users that opt in to the Chrome User Experience Report (CrUX). There are a number of ways of getting at the data, but the easiest is by running a website through PageSpeed Insights, which runs the Lighthouse Performance audit and also shows any CrUX data if a site is popular enough. For less popular sites, site owners can see the data in Google Search Console. It takes time to collect this from a sufficient number of users, so don't expect to see any immediate impact in either of these tools as you improve your website. Lighthouse will show an immediate impact if you rerun it, but Core Web Vitals data will take up to 28 days for any changes to be visible.

Given that limitation, I'm going to concentrate on the Lighthouse tests, but am going to try to ensure the site I'm going to create should also pass Core Web Vitals. So for example I won't be looking to optimise just the initial CLS, but ensure there are no CLS impacts at any point in the page (so getting good Lighthouse and CWV scores) but still "feel slow" to the end user.
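To illustrate why the whole page lifetime matters, here is a minimal sketch of a lifetime CLS total as described above: add up every layout shift that was not caused by recent user input. The `value` and `hadRecentInput` fields mirror the browser's layout-shift entries; the `LayoutShiftEntry` type, the helper and the sample numbers are hypothetical.

```typescript
// The two fields used from a browser "layout-shift" performance entry.
interface LayoutShiftEntry {
  value: number;           // score of this individual shift
  hadRecentInput: boolean; // true when the shift closely followed user input
}

// Sums all shifts that were not triggered by recent user input.
function cumulativeLayoutShift(entries: LayoutShiftEntry[]): number {
  let total = 0;
  for (const entry of entries) {
    if (!entry.hadRecentInput) {
      total += entry.value;
    }
  }
  return total;
}

// Hypothetical page: one small shift during load, one large shift long after load.
const duringLoad: LayoutShiftEntry[] = [{ value: 0.02, hadRecentInput: false }];
const afterLoad: LayoutShiftEntry[] = [{ value: 0.3, hadRecentInput: false }];

// A lab run that stops shortly after load only sees the early shift.
const labCls = cumulativeLayoutShift(duringLoad); // 0.02
// Field data covers the whole visit, so the late shift counts as well.
const fieldCls = cumulativeLayoutShift(duringLoad.concat(afterLoad)); // roughly 0.32
```

A page that simply delayed its layout shifts until after the lab run finished would look fine in Lighthouse but not in the field data, which is why I want to avoid shifts altogether.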

First slow test site

OK so let's get started. Getting a 100 Performance score in Lighthouse is actually quite difficult and, unlike the other categories, few websites make that cut. So rather than starting with a slow webpage and tricking Lighthouse into thinking it is fast, let's come at it from the other end: have a fast webpage, and trick the users into thinking it is slow. The easiest way to get a webpage that scores 100 for Performance is to have a single HTML page with everything (CSS, images and any JS) inlined directly into the page. I mean who doesn't like a literal Single Page App in web performance circles! :-) After that we'll just need to artificially make it slow again.

So let's look at the first metric we're trying to fool: FCP. We need content that is drawn but not useful, so let's draw some text to screen really quickly. This will help pass FCP, and LCP if we make it big enough, and ensures there is no CLS. However, we'll make it the same colour as the background so it is effectively useless. Then 10 seconds later we'll get some JavaScript to kick in and change the font colour.
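A minimal sketch of that idea might look like the following. It is an illustration rather than the exact page used for the tests: the `buildSlowPage` helper, the element id and the heading text are all hypothetical, and the page is generated as a string simply to keep the example self-contained.

```typescript
// Builds a single self-contained HTML page whose heading is painted immediately
// in the background colour, then switched to a readable colour after `delayMs`.
function buildSlowPage(delayMs: number): string {
  return `<!DOCTYPE html>
<html lang="en">
<head>
  <meta charset="utf-8">
  <meta name="viewport" content="width=device-width, initial-scale=1">
  <title>Slow "fast" page</title>
  <style>
    body { background: #fff; }
    /* Same colour as the background: painted straight away, but unreadable */
    h1 { color: #fff; font-size: 3rem; }
  </style>
</head>
<body>
  <h1 id="content">Hello, eventually</h1>
  <script>
    // Low-CPU wait, then reveal the text
    setTimeout(function () {
      document.getElementById("content").style.color = "#000";
    }, ${delayMs});
  </script>
</body>
</html>`;
}

// Ten seconds of staring at a blank page
const slowPageHtml = buildSlowPage(10000);
```

Everything is inlined, so there are no extra network requests, and the timer does no real work while it waits, so the CPU stays idle.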

This led to Lighthouse failing to calculate a score, as it timed out before any content was drawn. That was great for 5 out of the 6 metrics, but caused an error for Speed Index. Apparently when nothing is drawn to screen, Speed Index doesn't like it (divide by zero error?).

So OK, let's hack it a bit more so that a single full stop is drawn. That way something appears on the page to keep Speed Index happy, even if it is too small for most human eyes to really notice.

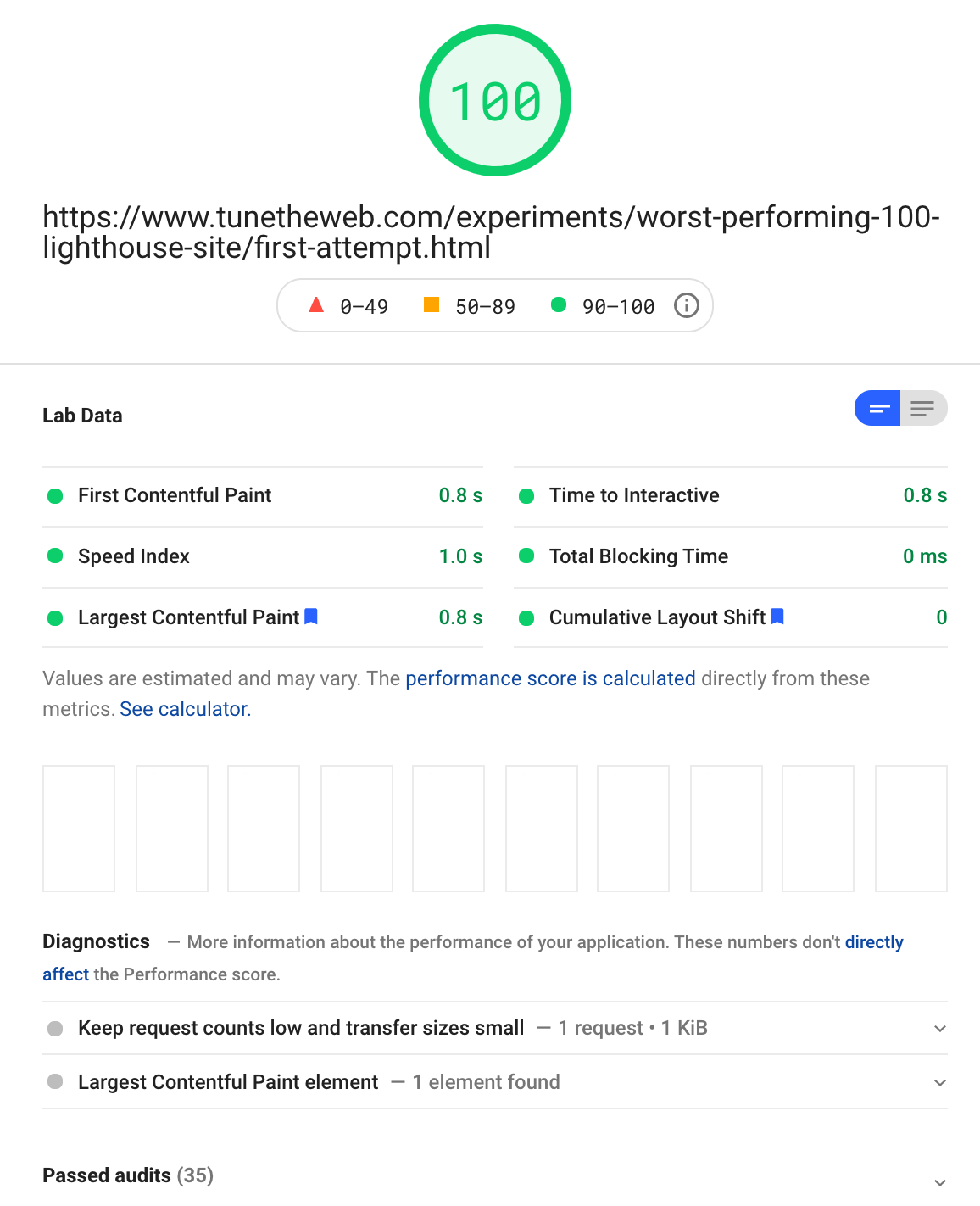

And we have success!

Near perfect scores in all metrics, even though nothing readable is actually drawn to screen until well after the audit finishes! Also no other issues flagged, apart from two informational pieces detailing the makeup of the site and the LCP candidate it decided upon.

Now how much of this is due to fooling Lighthouse and how much is due to the fact it just timed out? Well, Lighthouse waits until FCP and LCP have completed and there have been no network requests for a short while, and then assumes (not unreasonably) the page is basically done. This makes Lighthouse run quicker for faster websites and slower for slow websites, rather than having a fixed time for all tests. So abusing that is the easiest way to get around the trickier Speed Index audit, as that's the only audit that really checks when the whole page (at least above the fold) is drawn.

To prove this is a real LCP and FCP, if I reduce the timeout to 500ms instead of 10,000ms, so the text is switched to black inside the Lighthouse time limits, then I get the exact same scores, except for Speed Index, which now registers that some content was drawn later. So it does look like the white-coloured font hack is enough to fool LCP and FCP. Surely that's a bug in Lighthouse, and I imagine it'll be fixed at some point, so I wouldn't depend on it! Interestingly, asking Chrome for the Core Web Vitals (using the Core Web Vitals library) shows that CWV is also fooled by invisible text, which is not really surprising, as Lighthouse runs Chrome and so is likely getting the data from the same source.

Weirdly, the Accessibility audit doesn't pick this up as insufficient colour contrast, which sounds like another bug in Lighthouse (the axe DevTools Chrome extension does pick this up). However, the intention is not to have invisible text: it is just a method to make the page unusable while still fooling Lighthouse. So arguably it is not an accessibility issue (though it should be, since it wasn't resolved before Lighthouse finished).

But are these really "slow" websites? To the user, yes: the content isn't visible until later, so they are hanging around waiting for it. But it has loaded in that time, and it's a deliberate choice of mine to hide the content. I don't think this really simulates a slow JavaScript website doing lots of processing, as that would be picked up. I've literally done a low CPU sleep to fake this into being a "slow" website. Still, I think it meets the brief without too much effort.

Another alternative would be to have the LCP item drawn early, say the <h1> tag, and to ensure all the other onscreen content was smaller than that (so it didn't become the LCP object) and loaded later. Again, Speed Index will pick up these changes if you allow Lighthouse sufficient time to complete, but with some careful fiddling or enough space above the fold, it may still be possible to get a 100 score, since Speed Index only forms 15% of the Performance score.
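To see how much slack that 15% leaves, here is a rough sketch of the weighted average behind the Performance score. The 15% for Speed Index is from above; the other weights are my assumption based on the published Lighthouse v6/v7 weightings, and the rounding to a whole number is also an assumption. The `performanceScore` helper and the sample metric scores are hypothetical.

```typescript
// Metric weights, assuming the Lighthouse v6/v7 scoring model (they sum to 1).
const weights = { fcp: 0.15, si: 0.15, lcp: 0.25, tti: 0.15, tbt: 0.25, cls: 0.05 };

// Each metric gets its own score between 0 and 1 before weighting.
type MetricScores = Record<keyof typeof weights, number>;

// Weighted average of the metric scores, shown as a whole number out of 100.
function performanceScore(scores: MetricScores): number {
  let total = 0;
  for (const key of Object.keys(weights) as (keyof typeof weights)[]) {
    total += weights[key] * scores[key];
  }
  return Math.round(total * 100);
}

// Hypothetical runs: every metric is perfect except Speed Index.
const slightlyOff = performanceScore({ fcp: 1, si: 0.97, lcp: 1, tti: 1, tbt: 1, cls: 1 }); // 100
const clearlyOff = performanceScore({ fcp: 1, si: 0.8, lcp: 1, tti: 1, tbt: 1, cls: 1 }); // 97
```

So under these assumptions a slightly imperfect Speed Index still rounds up to 100, but there is not much room to play with.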

An even easier answer is to draw the "above the fold" content in its entirety, and then just make the "below the fold" content slow, since Lighthouse (and FCP and LCP in CWV) only care about the initial above the fold content. But that seems a bit of a cheeky hack since it is abusing the known limitations of Lighthouse (ignore the fact that the rest of the hacks in this proof of concept are using lesser known Lighthouse hacks!).

Others have come up with hacks to fool the Core Web Vitals (including this cheeky LCP hack) and I'm sure that won't be the last we hear of this. When there are real business benefits on the line, and a whole industry around Search Engine Optimisation, you're bound to find a few shady tricksters trying to game the system. Much like other black hat SEO techniques, this will surely carry more risk in the long term. Not to mention the fact that the reason Google is trying to promote web performance is that users care about it. So while tricking Core Web Vitals may work in the ultra-short term (until you're discovered), it is really not something to recommend at all.

A fuller test

So that was a very basic website, but taking those principles I created a slightly more complex one with nicer fonts (system fonts, to avoid the web performance issues web fonts can cause, which make 100 very difficult), an image, and even a button that also has a click delay (to simulate some of the web performance frustrations TTI, TBT and FID are trying to measure). You can view the final slow page here and it also gets a perfect 100 Lighthouse score despite feeling slow. I reduced those delays to 5 seconds because I think the point is made and I don't want to annoy you readers too much!

It is still a very basic website, but hopefully demonstrates that it is still possible to score a hundred with a "slow" website. Plus I think I've dragged this little exercise out way too long as it is anyway! As I say this was all just a bit of fun, and a learning exercise for myself to learn a bit more about Lighthouse and Core Web Vitals.

Conclusion

So, in conclusion it is possible to build a poorly performing website with a Lighthouse score of 100. However I think it is extremely unlikely you'd see such a website in the wild as you pretty much have to have a fast website you make slow — so it is entirely pointless, except as a proof of concept like this one was.

That's not to say there aren't websites scoring higher than they really deserve to. Again, any automated tool is going to be limited in what it can test, and be subject to edge cases that it possibly doesn't handle as well as it could.

Saying all that, it is quite exciting that we're growing what we can automatically measure to more accurately reflect what the end user feels as a performant website. The Lighthouse audits and Core Web Vitals are by no means perfect, and I don't think anyone will even come close to claiming they are intended to be, but they are a concerted effort to objectively measure the various aspects of what a fast website means. They will continue to grow and change, but it is very interesting what we already have. And while it is not that difficult to fool them as I've shown in this post, it is also not that easy either. The move to really measure real user usage and expose this to website owners is a positive step despite any limitations of the metrics, and the collection mechanism (Chrome only).

What's even more exciting is that it is getting so much attention with the launch of Core Web Vitals and its upcoming impact on search rankings. Google gets a lot of stick due to the dominant position it is in and the undue influence it can have on the web, but this is one I'm firmly behind. Let's just hope it leads to faster websites all round and any hacks around it aren't allowed to game the system. But ultimately this is but one part of search engine ranking, and no matter how important it is (and personally I think it is being over hyped at the moment, but am quite happy about that), no one will repeatedly come to a bad or useless website no matter how well it ranks. Use the metrics to guide you, but it is the users that count at the end of the day.

Want to read more?

More Resources on Lighthouse and Core Web Vitals

- The original Tweet that kicked this off from Zach Leatherman.

- Building the most inaccessible site possible with a perfect Lighthouse score by Manuel Matuzović, that looked at doing the same for the Accessibility audit.

- An introduction to Google Lighthouse.

- Lighthouse's GitHub repo for raising and tracking issues.
